Projects

Flutter/Stutter

2016

Collaborators: Project led by Camille Baker and Kate Sicchio as part of Hacking the Body 2.0 along with Tara Baoth Mooney.

Documentation: Video of performance at ICLI in Bristol, UK, Paper in proceedings of ICLI 2016

Flutter/Stutter is an improvised dance performance mediated by the technologies that support the Internet of Things. A pair of dancers wear e-textile garments that tickle their necks to indicate when they should produce a dance movement. The 'tickle motors' are triggered by the textile touch sensors integrated into the other dancer's garment or by the choreographer off-stage from a laptop.

I designed and built the hardware sensing and actuating system, the supporting networking software that passes MQTT messages, and the software front-end used by the choreographer.
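As a rough illustration of the message flow described above, the sketch below routes a touch event from one dancer's garment to the other dancer's tickle motor, and lets the choreographer trigger either dancer directly. It is not the project's code: the topic names, the dancer labels, and the `route` function are all hypothetical, and no MQTT library is used so that the example stays self-contained.

```python
# Hypothetical topic layout; the project's real topics are not documented here.
DANCERS = ("a", "b")


def other(dancer):
    """Return the label of the other dancer in the pair."""
    return DANCERS[1] if dancer == DANCERS[0] else DANCERS[0]


def route(topic):
    """Map an incoming topic to the motor topic that should fire, or None.

    'garment/<dancer>/touch'        -> tickle the *other* dancer
    'choreographer/<dancer>/tickle' -> tickle the named dancer
    """
    parts = topic.split("/")
    if len(parts) != 3 or parts[1] not in DANCERS:
        return None
    source, dancer, event = parts
    if source == "garment" and event == "touch":
        return f"garment/{other(dancer)}/motor"
    if source == "choreographer" and event == "tickle":
        return f"garment/{dancer}/motor"
    return None


print(route("garment/a/touch"))          # garment/b/motor
print(route("choreographer/a/tickle"))   # garment/a/motor
```

In a real deployment this decision could live in a broker-side script or on the garments themselves; the sketch only shows the mapping.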

Human Harp

2013 to 2015

Collaborators: Project led by Di Mainstone; collaborators have included Adam Stark, David Blair Ross, Seb Madgwick, and John Nussey

Documentation: Human Harp Site, The Creators Project

The Human Harp is an ongoing research project and installation led by Di Mainstone, who was inspired by the Brooklyn Bridge and the movement of people. The piece is currently in its third engineering iteration.

I have designed the sensors that track the movement of the string from the modules, along with the networking that connects those modules to each other and to the software generating the audio. I am currently handling the system engineering.

Adventures in Arduino

Published by Wiley in May 2015

Documentation: Supporting site, Wiley site

Adventures in Arduino is a book for ages 11-15 that teaches programming and electronics with the Arduino platform. It consists of 9 projects that incorporate paper craft and sewing alongside microcontroller programming.

Each project is accompanied by a tutorial video and the source code is available to download.

LV Hypercube

2014

Collaborators: Project led by Hellicar & Lewis in collaboration with Mule

Documentation: Hellicar & Lewis

The band LV collaborated with design studio Hellicar & Lewis on the image for their album, Islands. The imagery centred around hypercubes and I engineered an LED structure. The technology involved Fadecandy and Neopixels in a custom-built frame with 3D printed joints.

Memories for Futures 10

December 2013

Collaborator: Stefanie Posavec

Documentation: Blog post documenting the project

Stefanie Posavec was invited to contribute to Futures 10, the closing exhibition of the Wearable Futures Conference. She asked me to help bring her concept of a wearable piece off the page and into a physical prototype. The concept was a necklace that could hold geo-tagged memories. The significance of the memory would be relayed through patterns and colour of light triggered when the wearer was near a point of interest.

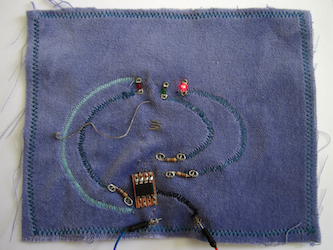

Excuse Me Swatch

July 2013

Documentation: Full swatchbook, my swatch

The eTextile Swatchbook is an exchange of swatches by artists, designers, engineers, and technologists working with eTextiles that demonstrates the latest developments in the field. My swatch for 2013 was a simple game to illustrate some slightly more advanced interactions with Arduino programming. The swatch is a one button game of timing. The goal is to hit the button when the green LED is lit.
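The timing rule of the game can be sketched in a few lines. This is only an illustration of the idea, not the swatch's Arduino code: the LED order, the `STEP_MS` duration, and the function names are assumptions.

```python
# Assumed LED order and timing; the swatch's real values are not given here.
LED_SEQUENCE = ("red", "yellow", "green", "yellow")
STEP_MS = 300  # how long each LED stays lit


def lit_led(elapsed_ms):
    """Return which LED is lit at a given time since the game started."""
    step = (elapsed_ms // STEP_MS) % len(LED_SEQUENCE)
    return LED_SEQUENCE[step]


def is_hit(press_ms):
    """A press wins only if it lands while the green LED is lit."""
    return lit_led(press_ms) == "green"


print(is_hit(650))  # True: 650 ms falls in the third step (600-899 ms)
print(is_hit(100))  # False: the red LED is lit
```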

Metaprojection Jacket

April 2013 Performance in South Tank of Tate Modern

Collaborators: Melissa Matos of TRUSST, Jacques Greene of Vase, Rad Hourani, Rachel Freire

Press: Dazed Digital

In a piece dreamed up by Melissa Matos, Jacques Greene performed in the South Tank of the Tate Modern while wearing a jacket with embedded cameras. The viewpoints of the cameras showing the crowd and his performance setup were projected onto the walls of the Tank.

The jacket was from Rad Hourani's collection and Rachel Freire assisted me with integrating the technology into the clothing. I engineered the system using hacked PS3 Eye cameras and openFrameworks.

GPS Shoes

First exhibited September 2012 at KK Outlet

Collaborators: Dominic Wilcox, Nicolas Cooper of Stamp Shoes

Selected Press: Dezeen, Gizmodo, NPR

Dominic Wilcox was commissioned to design a pair of shoes to guide you home. Nicolas Cooper of Stamp Shoes handmade the shoes and I designed the technology that was embedded into the shoes.

An Arduino was placed in the heel of each shoe, and the pair communicated over RF. Each one controlled the LEDs on the toebox of its shoe, and the left shoe also communicated with a GPS. A reed switch and magnet placed near the inside of each ankle allowed the wearer to activate the GPS by clicking his heels together three times. The accompanying software was written in Processing.
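The three-click activation could be detected along the following lines. This is a minimal sketch rather than the shoes' firmware: it assumes the reed switch closures have already been debounced into a list of timestamps, and the `WINDOW_MS` threshold is a made-up value.

```python
# Assumed timing window; the shoes' real threshold is not documented here.
WINDOW_MS = 1500
CLICKS_NEEDED = 3


def triple_click(close_times_ms):
    """Return True if any three consecutive switch closures fit in the window.

    close_times_ms: ascending timestamps (ms) at which the reed switch closed.
    """
    for i in range(len(close_times_ms) - CLICKS_NEEDED + 1):
        first = close_times_ms[i]
        last = close_times_ms[i + CLICKS_NEEDED - 1]
        if last - first <= WINDOW_MS:
            return True
    return False


print(triple_click([0, 400, 900]))     # True
print(triple_click([0, 2000, 4000]))   # False
```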

Pig with Wigs

December 2011

Documentation: Blog posts Part 1, Part 2, Part 3 and Part 4

I've always loved the Mochi Mochi Land creations by Anna Hrachovec, so as a present for my sister, I adapted her pig with wigs pattern. I stuffed an Arduino and piezo inside the pig and created switches with snaps and the different wigs. I hand transcribed three pop songs to be sung by the pig with each one triggered by a different wig.

Table19 Christmas

November 2013

Client: Table19

Table19 wanted to create an installation outside of their office that the public could unknowingly wander into and interact with. Using Processing and a Kinect, I wrote a program that let you select the surfaces of objects that the Kinect camera could see and then attach sounds to those surfaces. The designers at Table19 made vinyl stickers that they placed on the pavement and then used the Processing program to attach a line from "We Wish You a Merry Christmas" to each sticker.

Chinese Character Tracing

2013

Client: British Museum Learning Department

Collaborator: Samuel Cox

The Learning Department at the British Museum had a special exhibit for the Chinese New Year and wanted children to try to draw the characters from the Chinese zodiac in an unconventional way.

I worked with interaction designer and technologist Samuel Cox. Samuel created an Arduino-driven RFID interface for the children to select the character using cards with an image of the animal. I wrote a Processing program to interface with the Kinect and Arduino.

Hacks

The following projects were conceived and executed within 24- or 36-hour periods at hack days and similar events.

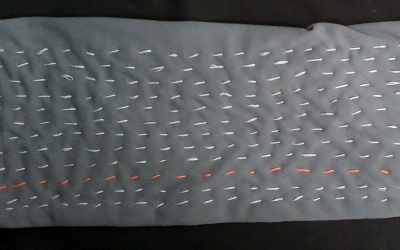

Project Jane

Event: Sonar Music Hack Day Barcelona 2015

The scarf is entirely fabric and thread, with the exception of a single hard circuit board in the centre. A micro USB cable plugs into the board, connecting it to a computer. The wearer can choose the gestures they would like to use: touching the scarf in different areas, poking it with one finger, or any other hand position that feels natural. A piece of software on the computer observes these different positions and learns them. The example application used at the Hack Day is a drum machine, but this is just a single use case. The wearer controls the drum machine playback by creating the hand positions that they just had the computer learn.
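One simple way to "learn" hand positions from sensor readings is a nearest-centroid classifier, sketched below. The learning method actually used at the hack day is not stated here, so treat this as a stand-in technique; the `GestureLearner` class, the gesture names, and the three-channel readings are all hypothetical.

```python
import math


class GestureLearner:
    """Nearest-centroid classifier over fixed-length sensor readings."""

    def __init__(self):
        self.examples = {}  # gesture name -> list of recorded readings

    def learn(self, name, reading):
        """Record one example reading for a named hand position."""
        self.examples.setdefault(name, []).append(list(reading))

    def _centroid(self, readings):
        # Average each sensor channel across the recorded examples.
        return [sum(channel) / len(readings) for channel in zip(*readings)]

    def recognise(self, reading):
        """Return the learned gesture whose centroid is closest, or None."""
        best_name, best_dist = None, math.inf
        for name, readings in self.examples.items():
            dist = math.dist(reading, self._centroid(readings))
            if dist < best_dist:
                best_name, best_dist = name, dist
        return best_name


learner = GestureLearner()
learner.learn("poke", [0.9, 0.1, 0.0])
learner.learn("poke", [0.8, 0.2, 0.1])
learner.learn("flat_hand", [0.5, 0.5, 0.6])
print(learner.recognise([0.85, 0.1, 0.05]))  # poke
```

Each recognised gesture name could then be mapped to an action in the application, such as starting or stopping drum machine playback.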

See my blog post for more videos and details.

Festival Bag

Event: Music Hack Day Midem 2015

The bag starts with an animation to indicate it’s waiting to be connected to a Bluetooth LE device. The phone can send messages to the bag and instruct it to light up for particular notifications. As a demo, I had it light up all red and all green. This could be a notification that you’ve received a text message or that a show you indicated you want to attend is about to start. It alerts you to look at your phone for more information. All the physical interactions with the bag use the tassels at the end of the drawstrings. Touch the pink tassel to the beadwork along the bottom of the bag to change the animation or to clear a notification and return to the animation.

See my blog post for more videos and details.

Wallify

Event: Music Hack Day Iceland 2012

Collaborators: Hakkavelin

I took meters of digitally addressable LED strip lights to the hack day without a project in mind. Working with two members of the local hackspace, Hakkavelin, we arranged the lights on a coat rack and diffused the lights with paper napkins. We wrote a serial interface using an Arduino to control the colors of the lights and I wrote a Processing program that queried the Echo Nest for artist images. When a desired image was selected, it was then displayed on the lights. There were issues with the color balance, but images with distinct lines and colors worked well.
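Showing an image on a small grid of LEDs means reducing it to one color per light. The sketch below does this by averaging blocks of pixels; it is an assumed approach rather than what the Processing program did, and the `downsample` function and the tiny test image are hypothetical.

```python
def downsample(image, rows, cols):
    """Average an image down to rows x cols, one RGB value per LED.

    image: list of rows, each a list of (r, g, b) tuples; its height and
    width are assumed to be exact multiples of rows and cols.
    """
    block_h = len(image) // rows
    block_w = len(image[0]) // cols
    grid = []
    for r in range(rows):
        led_row = []
        for c in range(cols):
            block = [
                image[y][x]
                for y in range(r * block_h, (r + 1) * block_h)
                for x in range(c * block_w, (c + 1) * block_w)
            ]
            # Integer mean of each color channel across the block.
            led_row.append(tuple(sum(ch) // len(block) for ch in zip(*block)))
        grid.append(led_row)
    return grid


# A 2x4 image reduced to a 1x2 LED grid.
image = [
    [(255, 0, 0), (255, 0, 0), (0, 0, 255), (0, 0, 255)],
    [(255, 0, 0), (255, 0, 0), (0, 0, 255), (0, 0, 255)],
]
print(downsample(image, 1, 2))  # [[(255, 0, 0), (0, 0, 255)]]
```

Averaging blurs fine detail, which is consistent with images that have distinct lines and colors surviving the reduction best.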

See my blog post for more videos and details.

Badgify

Event: Music Hack Day Midem 2012

Collaborator: Suzie Blackman

There are numerous ways that you can go online and see what your friends are listening to, but it's much more difficult to passively find out what the people around you on the bus are listening to. Badgify was a small LCD that could be mounted on a bag to display what you are currently listening to. The LCD is powered by an Arduino which communicates via Bluetooth to an Android device which periodically updates the badge according to what you are scrobbling to Last.fm.

Suzie Blackman did the server side scripting and I did the hardware and Android programming.

See my blog post for more videos and details.

Kinect Killed the Video Star

Event: Music Hack Day London 2011

Collaborator: Adam Stark

This was one of my earlier Kinect pieces. Adam Stark and I created a real-time green screen-like video capture system using the Kinect. The background of the captured video that was not a person was automatically removed and replaced with a music video. The concept was that you could join your favorite music video.
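The background replacement can be sketched with a simple depth cut-off: pixels close to the sensor are kept from the camera, and everything else is taken from the music video. This is an assumed simplification, since the hack may well have used the Kinect's person segmentation instead; the `composite` function and the `MAX_PERSON_DEPTH_MM` threshold are hypothetical.

```python
# Assumed cut-off: anything nearer than this counts as the person.
MAX_PERSON_DEPTH_MM = 2000


def composite(camera, depth, video):
    """Keep camera pixels near the sensor; replace the rest with video pixels.

    All three arguments are equally sized 2D lists. A depth of 0 is treated
    as "no reading" and counted as background.
    """
    out = []
    for cam_row, depth_row, vid_row in zip(camera, depth, video):
        out.append([
            cam if 0 < d < MAX_PERSON_DEPTH_MM else vid
            for cam, d, vid in zip(cam_row, depth_row, vid_row)
        ])
    return out


camera = [["P", "P", "w"]]   # P = person pixel, w = wall pixel
depth = [[1200, 1500, 3500]]
video = [["v", "v", "v"]]
print(composite(camera, depth, video))  # [['P', 'P', 'v']]
```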